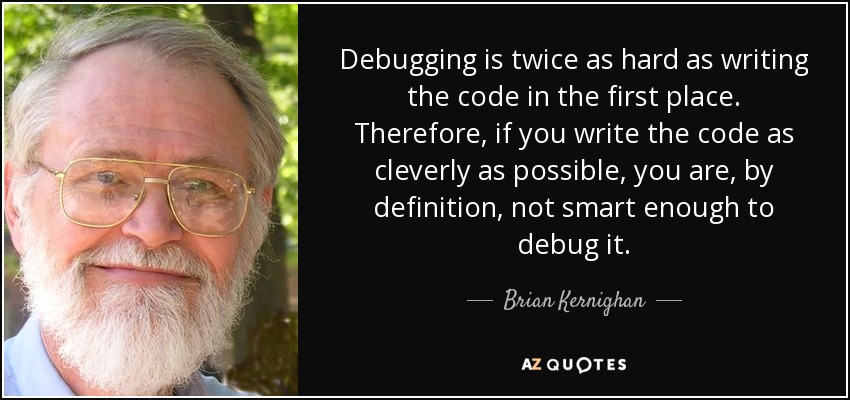

Of course they won’t be; somebody has to debug all the crap AI writes.

If, 24 months from now, most people aren’t coding, it’ll be because people like him cut jobs to make a quicker buck. Or nickel.

Well if it works, means that job wasn’t that important, and the people doing that job should improve themselves to stay relevant.

Oh perhaps the CEOs are the ones that need to be replaced?

Yup, notice nowhere did I say they shouldn’t. People read and infer what they want.

Define “works.”

Because the goals of a money-hungry CEO don’t always align with those of the workers in the company itself (or often, even the consumer). I imagine this guy will think it worked just fine as he’s enjoying his golden parachute.

Define “works”?

If you’re a CEO, cutting all your talent, enshittifying your product, and pocketing the difference in new, lower costs vs standard profits might be considered as “working”.

Hmmm maybe you’re misunderstanding me.

What I mean is “coding” is basically the grunt work of development. The real skill is understanding the requirements and building something efficiently. Tbh, I hate coding.

What tools like Gemini or ChatGPT bring to the table is the ability to create small, efficient snippets of code that work. We can then just modify them to meet our more specific requirements.

This makes things much faster, for me at least. If the time comes when the AI can generate more efficient code, making my job easier, I’d count that as “works” for me.

“job wasn’t that important”

I keep telling you that changing out the battery in the smoke alarm isn’t worth the effort and you keep telling me that the house is currently on fire, we need to get out of here immediately, and I just roll my eyes because you’re only proving my point.

Sure, believe what you want to believe. You can either adapt to what’s happening, or just get phased out. AI is happening whether you like it or not. You may as well learn to use it.

AI can’t do anything that hasn’t been done before. That’s never going to change.

It has already happened… And the results are a bit… “Cringe”

You can adapt, but how you adapt matters.

AI in tech companies is like a hammer or drill. You can either get rid of your entire construction staff and replace them with a few hammers, or you can keep your staff and give each worker a hammer. In the first scenario, nothing gets done, yet jobs are replaced. In the second scenario, people keep their jobs, their jobs are easier, and the house gets built.

Yup. Most of us aren’t CEOs, so we don’t have a lot of say about how most companies are run. All we can do is improve ourselves.

For some reason, a lot of people seem to be against that. They prefer to whine.

I get why you’re enthusiastic about AI. This whole comment reads like it was AI generated.

😜 👢

Like in Twitter?

Nah that was just a bad CEO

It will be interesting to find out if these words will come back and haunt them.

- “I think there is a world market for maybe five computers”.

- “640K ought to be enough for anybody.”

I’m curious about what the “upskilling” is supposed to look like, and what’s meant by the statement that most execs won’t hire a developer without AI skills. Is the idea that everyone needs to know how to put ML models together and train them? Or is it just that everyone employable will need to be able to work with them? There’s a big difference.

I’m going with the latter. Even my old college which is heavily focused on development is incorporating AI into the curriculum. Mainly because they’re all using it to solve their assignments anyway. Since it isn’t likely to go away and it’s a ‘tool’ they’ll have available when they hit the workforce they are allowing its use.

I’m not looking forward to seeing code written by some of these people in the wild. Most of the AI code I’ve seen is truly horrendous. I can’t imagine an entire business application of just strung together AI code being maintainable at all.

I’ll just leave this here cause this future reality is even worse since they likely don’t understand the code to begin with.

I know how to purge one off of a system, does that count?

I’m going to call BS on that unless they are hiding some new models with huge context windows…

For anything that’s not boilerplate, you have to type more as a prompt to the AI than just writing it yourself.

Also, if you have a behaviour/variable that is common to something common, it will stubbornly refuse to do what you want.

Have you ever attempted to fill up one of those monster context windows up with useful context and then let the model try to do some useful task with all the information in it?

I have. Sometimes it works, but often it’s not pretty. Context window size is the new MHz, in terms of misleading performance measurements.

I find there comes a point where, even with a lot of context, the AI just hasn’t been trained to solve the problem. At that point it will cycle you round and round the same few wrong answers until you give up and work it out yourself.

Guys selling something claim it will make you taller and thinner, your dick bigger, your mother in law stop calling, and work as advertised.

It’ll replace brain dead CEOs before it replaces programmers.

I’m pretty sure I could write a bot right now that just regurgitates pop science bullshit and how it relates to Line Go Up business philosophy.

ChatJippity

I’ll start using that!

    if lineGoUp {
        CollectUnearnedBonus()
    } else {
        FireSomePeople()
        CollectUnearnedBonus()
    }

I love how even here there’s line metric coding going on

I think we need to start a company and commence enshittification, pronto.

This company - employee owned, right?

I’m just going to need you to sign this Contributor License Agreement assigning me all your contributions and we’ll see about shares, maybe.

Yay! I finally made it, I’m calling my mom.

Sure, Microsoft is happy to let their AIs scan everyone else’s code, but is anyone aware of any software houses letting AIs scan their in-house code?

Any lawyer worth their salt won’t let AIs anywhere near their company’s proprietary code until they are positive that the AI isn’t going to be blabbing the code out to every one of their competitors.

But of course, IANAL.

The LLMs they train on their code will only be accessible internally. They won’t leak their own intellectual property.

Will that not be more expensive than having developers?

Yeah which is why this is a dumb statement from Amazon. But then again I don’t expect C-suite managers to really understand the intricacies of their own companies.

Depends on the use case. Training local LLMs is a lot cheaper after GaLore, and there are ways to get useful local models with only a moderate amount of effort; see e.g. augmentoolkit.

This may or may not be practical in many use cases.

24 months is pretty generous but no doubt there will be significantly less demand for junior developers in the near future.

Of course not. It will be more expensive and they’ll still have to pay developers to figure out what’s wrong with their AI code.

Possibly. It’s hard to know without seeing the numbers and assessing output quality and volume.

Also it’s not unheard of that some bigwig wastes millions of company €€ for some project they fancy. (Billions if they happen to be Elon)

If only we had an overarching structure that everyone in society has agreed exists for the purposes of enforcing laws and regulating things. Something that governs people living in a region… Maybe then they could be compelled to show exactly what they’re using, and what those models are being trained with.

Oh well.

That’d be an exciting world, since it’d massively increase access to software.

I am also very dubious about that claim.

In the long run, I do think that AI can legitimately handle a great deal of what humans do today. It’s something to think about, plan for, sure.

I do not think that anything we have today is remotely near being on the brink of the kind of technical threshold required to do that, and I think that even in a world where that was true, it’d probably take more than 2 years to transition most of the industry.

I am enthusiastic about AI’s potential. I think that there is also – partly because we have a fair number of unknown unknowns, and partly because people have a strong incentive to oversell the particular AI thing that they personally are involved with to investors and the like – a tendency to be overly-optimistic about the near-term potential.

I have another comment a while back talking about why I’m skeptical that the process of translating human-language requirements to machine-language instructions is going to be as amenable as translating human-language to human-consumable output. The gist, though, is that:

- Humans rely on stuff that “looks to us like” what’s going on in the real world to cue our brain to construct something. That’s something where the kind of synthesis that people are doing with latent diffusion software works well. An image that’s about 80% “accurate” works well enough for us; the lighting being a little odd or maybe an extra toe or something is something that we can miss. Ditto for natural-language stuff. But machine language doesn’t work like that. A CPU requires a very specific set of instructions. If 1% is “off”, a software package isn’t going to work at all.

- The process of programming involves incorporating knowledge about the real world with a set of requirements, because those requirements are in-and-of-themselves usually incomplete. I don’t think that there’s a great way to fill in those holes without having that deep knowledge of the world. This “deep knowledge and understanding of the world” is the hard stuff to do for AI. If we could do that, that’s the kind of stuff that would let us create a general artificial intelligence that could do what a human does in general. Stable Diffusion’s “understanding” of the world is limited to statistical properties of a set of 2D images; for that application, I think that we can create a very limited AI that can still produce useful output in a number of areas, which is why, in 2024, without producing an AI capable of performing generalized human tasks, we can still get some useful output from the thing. I don’t think that there’s likely a similar shortcut for much by way of programming. And hell, even for graphic arts, there’s a lot of things that this approach just doesn’t work for. I gave an example earlier in a discussion where I said “try and produce a page out of a comic book using stuff like Stable Diffusion”. It’s not really practical today; Stable Diffusion isn’t building up a 3D mental model of the world, designing an entity that stably persists from image to image, and then rendering that. It doesn’t know how it’s reasonable for objects and the like to interact. I think that to reach that point, you’re going to have to have a much-more-sophisticated understanding of the world, something that looks a lot more like what a human’s looks like.

The kind of stuff that we have today may be a component of such an AI system. But I don’t think that the answer here is going to be “take existing latent diffusion software and throw a lot of hardware at it”. I think that there’s going to have to be some significant technical breakthroughs that have not happened yet, and that we’re probably going to spend some time heading down dead-end approaches before we get to that. There’s probably going to be a lot of hard R&D before we get there, and that’s going to take time.


Says the person who is primarily paid with Amazon stock, wants to see that stock price rise for their own benefit, and won’t be in that job two years from now to be held accountable. Also, who has never written a line of code. Yeah…. Ok. 🤮

I guess the programmers should start learning how to mine coal…

We will all be given old school Casio calculators and sent to crunch numbers in the bitcoin mines.

All the manufacturers of mechanical keyboards just cried 🥺

I know just enough about this to confirm that this statement is absolute horseshit

Sounds like the NoOps of a decade ago, and the claim that cloud would remove the need for infrastructure engineers. 😂🤣😂🤣😂🤣😂😂😂🤣

SHUT UP AND GO BACK TO OUR SHITTY YAML BASED INFRASTRUCTURE!

Fuck yml, all my homies hate yml

JSON or bust!

Ok, since YAML is a superset, I can just put the JSON into the YAML.

That sounds cursed.

Please don’t 🙏🏻

🤣😂😪😥😢😭

It isn’t that AI will have replaced us in 24 months, it’s that we will be enslaved in 24 months. Or in the matrix. Etc.

Will the matrix it puts us in be in 1999? Because I’d take that deal.

Matrix lookin pretty good rn - 1999, stable climate, free apartment, 90s gf (she loves u) etc

How will federal contracts work?

Seriously how can these CEOs of a GPU company not talk to a developer. You have loads of them to interview

They obviously have to sell something. I doubt that they honestly think that this will happen.